Thanks everyone for your patience these past two months. I had to learn and do a lot to bring you this first release, so I hope you find the wait was worth it. Today I’m launching an alpha of my mod, imaginatively titled “ModVB”. The installation instructions explain how you can safely install it and use JSON Literals and Pattern Matching right now! (Spoiler: You install a VSIX and reference a NuGet package; that’s it.)

This post is about the new features included in “Wave 1”. These are not prototypes like in my previous videos. A prototype is implemented quickly to demonstrate the utility of one or a few narrow scenarios, taking whatever shortcuts are possible to get to something that “works” (enough to solicit feedback). The functionality in my mod, by contrast, is done “The Right Way”™ and should be extremely stable (crashes or freezes should be rare; please report them immediately) and pretty close to what could be the final design. Read more about the features below.

I’ve talked about JSON literals in previous posts, so I’ll start off with the newest feature on the block, pattern matching.

Pattern Matching

With respect to JSON, pattern matching is essentially the opposite of a JSON literal. Whereas a literal makes it easy to construct a JSON object in a way that preserves and reflects the structure of the JSON you’re trying to create, pattern matching allows you to deconstruct JSON in a way that likewise preserves and reflects the JSON’s structure.

Patterns

As of the time of this writing, here are the patterns that are supported by the Select Case ShapeOf syntax:

In all cases, null-ness may be “runtime extension defined” (see “Runtime Extensions & API Compatibility” below). This just means the compiler calls a helper method to determine whether a value counts as null rather than relying purely on CLR semantics. This is necessary because, for example, a JValue object is not a null reference but may represent the concept of null in the JSON space. Likewise, a JsonElement structure isn’t nullable but may still represent a null value.

The JSON Object Pattern

    {
        "<name 1>": <pattern>,
        "<name 2>": <pattern>,
        ...
    }

Matches a value if it: is not null, represents a JSON object (as defined by runtime extension), has the non-optional members named, and each member found matches its corresponding pattern, recursively.

This is evaluated top to bottom (depth first) so the entire first member and its pattern are evaluated before the second member is searched for.

This is not exhaustive; the JSON object may include more members than the ones listed and still match.

May be marked optional by placing a question mark ? after the closing brace, in which case the pattern will also match a null value, i.e. the value is null OR matches the above criteria.

An individual name-value sub-pattern can also be marked optional:

    Case {
        "firstName": required,
        "middleName"?: optional
    }

This example pattern requires that a firstName be included in the JSON payload, but the middleName may be absent entirely. Being absent is subtly distinct from being present but null. Some JSON producers omit null values by default, and this syntax provides an easy way of consuming JSON from those producers that treats an omitted value the same as one that is explicitly null.
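To make the checks behind the firstName/middleName pattern concrete, here is a rough hand-written equivalent in plain VB.NET against Newtonsoft.Json. It is only a sketch of the semantics described above, not the code the ModVB compiler actually generates; the names `TryMatchPerson` and `IsJsonNull` are made up for illustration, and treating an explicit null middleName the same as a missing one is an assumption based on the description.

```vb
Imports Newtonsoft.Json.Linq

Module ObjectPatternSketch
    ' Hypothetical helper: a Nothing reference and a JSON null token both count as "null".
    Function IsJsonNull(token As JToken) As Boolean
        Return token Is Nothing OrElse token.Type = JTokenType.Null
    End Function

    ' Hypothetical hand-written version of:
    '   Case { "firstName": required, "middleName"?: optional }
    Function TryMatchPerson(value As JToken,
                            ByRef firstName As JToken,
                            ByRef middleName As JToken) As Boolean
        firstName = Nothing
        middleName = Nothing

        ' The value must be non-null and must represent a JSON object.
        If IsJsonNull(value) Then Return False
        Dim obj = TryCast(value, JObject)
        If obj Is Nothing Then Return False

        ' "firstName" is not optional: it must be present and non-null.
        Dim first As JToken = Nothing
        If Not obj.TryGetValue("firstName", first) OrElse IsJsonNull(first) Then Return False

        ' "middleName"? is optional: missing or explicit null both leave the variable null.
        Dim middle As JToken = Nothing
        obj.TryGetValue("middleName", middle)

        firstName = first
        middleName = If(IsJsonNull(middle), Nothing, middle)
        Return True
    End Function
End Module
```

Extra members in the payload are ignored, which mirrors the non-exhaustive behavior of the object pattern.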

The Empty JSON Object Pattern

    {}

Matches a value if it: is not null, represents a JSON object, and that object has no members.

Unlike the non-empty JSON Object Pattern, this pattern is exhaustive.

May be marked optional by placing a question mark ? after the closing brace, in which case the pattern will match a value that is null or is an empty JSON object.

The JSON Array Pattern

    [
        <pattern>,
        <pattern>,
        ...
    ]

Matches a value if it: is not null, represents a JSON array (as defined by runtime extension), that array has at least as many elements as listed, and each of the first n elements matches its corresponding pattern, recursively.

This is evaluated top to bottom (depth first) so the entire first element and its pattern are evaluated before the second element is searched for.

This is not exhaustive; the JSON array may include more elements than the ones listed and still match.

May be marked optional by placing a question mark ? after the closing bracket, in which case the pattern will also match a null value, i.e. the value is null OR matches the above criteria.

The Empty JSON Array Pattern

    []

Matches a value if it: is not null, represents a JSON array, and that array has no elements.

Unlike the non-empty JSON Array Pattern, this pattern is exhaustive.

May be marked optional by placing a question mark ? after the closing bracket, in which case the pattern will match a value that is null or is an empty JSON array.
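Because a non-empty array pattern only checks the first n elements, the order of the Case clauses matters. The sketch below assumes the ModVB compiler plus the ModVB.Runtime.Extensions.NewtonsoftJson package, and assumes the alpha behaves as described above; `Describe` is a made-up name. The longer pattern is listed first, since `[only As String]` would also match an array with two or more elements.

```vb
Imports Newtonsoft.Json.Linq

Module ArrayPatternSketch
    ' Hypothetical helper that classifies an array of strings by its leading elements.
    Function Describe(value As JToken) As String
        Select Case ShapeOf value
            Case []
                ' Exhaustive: only an array with no elements matches.
                Return "empty"
            Case [first As String, second As String]
                ' Matches any array with at least two string elements.
                Return $"starts with {first}, {second}"
            Case [only As String]
                ' Reached only for arrays with exactly one element,
                ' because longer arrays were caught by the previous Case.
                Return $"just {only}"
        End Select
        Return "no match"
    End Function
End Module
```

Under those assumptions, `["GML", "XML", "SGML"]` would land in the second Case and `["GML"]` in the third.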

The Constant Value Pattern

    <constant literal>

This can be any constant literal in VB (including Date and Decimal values and Nothing) or the JSON constants true, false, or null. These JSON constants behave exactly as VB True, False, and Nothing respectively.

Matches a value if it (for constants other than Nothing or null): is not null, is convertible to the type of the constant, and after conversion has a value equal to that of the constant.

If constant is the literal Nothing or null, will match if the value is null.

May be marked optional by placing a question mark ? after the literal, in which case the pattern will match a value that is null or equal to the constant.

The Variable Declaration Pattern

    <identifier> As <Type>

Captures a value in a variable. The As <Type> clause may be omitted.

Matches a value if it: is not null and is convertible to the specified type. If the type is omitted the pattern will match any non-null value and the type of the variable is inferred from the type of the value.

Convertibility considers reference conversions and intrinsic language conversions as well as runtime extension defined conversions. In the absence of one of these a conversion may also be attempted if the value represents a String and the specified type defines a TryParse method or the specified type is an Enum type, in which case the pattern will succeed if a call to TryParse succeeds.

May be marked optional by placing a question mark ? after the type. If the type is omitted, the question mark ? may be placed after the identifier. In either case the pattern will match all values, null or otherwise.
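Constant and variable declaration patterns are most useful together inside an object pattern: constants filter, variables capture. The following is a sketch only, assuming the ModVB compiler and the NewtonsoftJson extension package; the payload shape and the name `Summarize` are invented for illustration, and the DayOfWeek capture relies on the Enum/TryParse fallback described above.

```vb
Imports System
Imports Newtonsoft.Json.Linq

Module ValuePatternSketch
    ' Hypothetical payload: {"kind": "order", "paid": true, "quantity": 3, "shipDay": "Friday"}
    Function Summarize(message As JToken) As String
        Select Case ShapeOf message
            ' "kind" and "paid" are constant patterns; "quantity" and "shipDay" are
            ' variable declaration patterns that convert the JSON value to the named type.
            Case {"kind": "order", "paid": true, "quantity": quantity As Integer, "shipDay": shipDay As DayOfWeek}
                Return $"{quantity} item(s), ships {shipDay}"
            ' Same kind, but matches only when "paid" is false.
            Case {"kind": "order", "paid": false}
                Return "unpaid order"
        End Select
        Return "unrecognized"
    End Function
End Module
```

If "quantity" held a value that cannot be converted to Integer, the first Case would simply not match and evaluation would move on to the next one.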

A note on optional patterns

When a JSON object pattern, one of its name-value subpatterns, or a JSON array pattern is marked optional, any variable declaration patterns within are necessarily nullable; if the parent pattern matches a null value these variables will also be initialized to null.

More to come

More patterns will be added later, as well as an expression version of the ShapeOf operator.

Runtime Extensions & API Compatibility

Both pattern matching and JSON literals are pattern-based, in the same way as LINQ and the For Each loop; they aren’t tied to any particular library or set of types. Instead, the implementations of these features look for helper types in a special Extensions namespace, decorated with the correct attributes, and having methods with certain names and shapes. The indirectness of that sentence reflects the levels of indirection built into the compiler to achieve this flexibility. You don’t need to worry about any of that other than that you should reference particular NuGet packages from the ModVB NuGet feed when you want to use these features with the corresponding APIs.

If you want to use JSON literals and/or pattern matching with:

- Newtonsoft.Json.Linq types (JObject/JArray/JContainer/JValue/JToken/JProperty)
  Reference package: ModVB.Runtime.Extensions.NewtonsoftJson

- System.Text.Json types (JsonElement/JsonProperty) or System.Text.Json.Nodes types (JsonObject/JsonArray/JsonValue/JsonNode)
  Reference package: ModVB.Runtime.Extensions.SystemTextJson

More such extension packages will be released for other JSON libraries in the coming weeks.

It’s worth noting that these are not mutually exclusive. You can reference as many extensions as are applicable to your project without conflict. Pattern matching only pays attention to the extensions required for the type being matched against and JSON literals are target-typed (more on that below).

JSON Literals

I talked about JSON literals in two earlier blog posts. Please check them out. The scenarios still apply, and the behavior is still the same, except that the JSON literals in this mod are far beyond prototype quality. There are two new behaviors as well: flattening and target typing.

Flattening

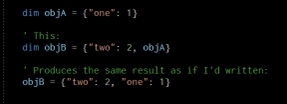

The properties of objA are “flattened” into objB (objA isn’t changed, of course). If instead of adding another object directly you add e.g. an IEnumerable(Of JObject), each JObject is flattened into the result in sequence:

The query yields a bunch of objects, but those objects are all flattened into the one outer object. This is a convenience feature particularly in LINQ as it allows you to yield a list of objects as a collection of properties, without requiring either an explicit syntax for a name-value pair or instantiating JProperty explicitly.
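For readers who want to see the result of flattening without the literal syntax, here is a rough plain VB.NET equivalent using Newtonsoft.Json. It is a sketch of the outcome described above, not ModVB's implementation; `Flatten` is a made-up helper, and what ModVB does when two sources share a property name is not covered here (this sketch would throw on a duplicate).

```vb
Imports System
Imports System.Collections.Generic
Imports Newtonsoft.Json
Imports Newtonsoft.Json.Linq

Module FlatteningSketch
    ' Hypothetical helper: copies the properties of each source object,
    ' in sequence, into one new object.
    Function Flatten(sources As IEnumerable(Of JObject)) As JObject
        Dim result As New JObject()
        For Each source In sources
            For Each prop In source.Properties()
                ' DeepClone keeps the source objects unchanged.
                result.Add(prop.Name, prop.Value.DeepClone())
            Next
        Next
        Return result
    End Function

    Sub Main()
        Dim objA = JObject.Parse("{""a"": 1, ""b"": 2}")
        Dim objC = JObject.Parse("{""c"": 3}")
        Dim objB = Flatten(New JObject() {objA, objC})
        Console.WriteLine(objB.ToString(Formatting.None)) ' prints {"a":1,"b":2,"c":3}
    End Sub
End Module
```

The same loop works for the output of a LINQ query, since a query that yields JObject values is just another IEnumerable(Of JObject).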

Target-typing

JSON Object expressions and JSON Array expressions are target-typed. This means that if you, for example, assign a JSON literal expression to a variable of type JObject/JArray (or one of their base types), or pass one as an argument to a parameter of such a type, the expression will construct that type. You can also use an explicit cast to determine the type of the resultant object.
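As a sketch of the three target-typing contexts just listed (assignment, argument passing, explicit cast), assuming the ModVB compiler and both extension packages are referenced; `BuildOrder` and `Save` are made-up names:

```vb
Imports Newtonsoft.Json.Linq
Imports System.Text.Json.Nodes

Module TargetTypingSketch
    ' Hypothetical consumer that wants a System.Text.Json.Nodes object.
    Sub Save(document As JsonObject)
    End Sub

    Function BuildOrder() As JObject
        ' Assignment: the declared variable type selects Newtonsoft's JObject.
        Dim order As JObject = {"name": "Ada", "tags": ["x", "y"]}
        Return order
    End Function

    Sub Main()
        ' Argument: the parameter type selects JsonObject for the same literal syntax.
        Save({"name": "Ada"})

        ' Explicit cast: the cast target selects the type, so node is a JsonObject.
        Dim node = CType({"name": "Ada"}, JsonObject)
    End Sub
End Module
```

The literal text is identical in all three places; only the surrounding type context changes which library's object gets built.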

Default JSON Object and Array types

In contexts where no target-type is available, such as when using local or method type inference, or contexts where the expression is being converted to Object, the expression will be evaluated as if it has the default JSON Object or Array type.

This lets you have a project that references multiple JSON APIs but doesn’t require explicit casts everywhere if one API is preferred. So, if for example, you have a project that predominantly uses the Newtonsoft JSON.NET types but you need to interoperate with APIs that work with the new System.Text.Json.Nodes types you would probably set your default object and array types to JObject and JArray and use target-typing in those specific times when you need to construct a JsonObject or JsonArray.

The default JSON Object type can be configured by setting the DEFAULT_JSON_OBJECT_TYPE preprocessor constant in your project settings to the fully qualified name of the type you wish to use. Likewise to set the default JSON Array type set the DEFAULT_JSON_ARRAY_TYPE constant.

If you didn’t know that VB pre-processing constants can have actual values—they can. A fact that I’m going to exploit heavily in future releases to avoid more cumbersome changes to MSBuild or the project system. Besides, after decades of being in the language, it’s time that capability start pulling its weight!
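As a quick illustration of valued conditional-compilation constants in stock VB, here is a small sketch. The constant names are invented, and it uses in-file #Const directives purely for demonstration; ModVB reads its DEFAULT_JSON_* constants from the project settings, and whether it would also honor an in-file #Const is not something this post states.

```vb
Imports System

' Conditional-compilation constants can hold numbers and strings, not just True/False.
#Const API_VERSION = 2
#Const FLAVOR = "beta"

Module ConstSketch
    Function Describe() As String
#If API_VERSION >= 2 And FLAVOR = "beta" Then
        ' Only this branch is compiled with the constants above.
        Return "v2 beta path"
#Else
        Return "default path"
#End If
    End Function

    Sub Main()
        Console.WriteLine(Describe()) ' prints v2 beta path
    End Sub
End Module
```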

A few more notes: If this setting isn’t in your project settings, the compiler defaults to the JSON.NET types because 2.17B downloads can’t be wrong and System.Text.Json.Nodes only exists in .NET 6 and later. I am thinking about making this default depend on whether you’re targeting .NET 6 and up or .NET Framework, though.

The default JSON Object and Array types do not impact pattern matching except when trying to use a JSON object or array pattern against a value of certain types like Object or interfaces. In that situation you’re assumed to be testing the reference against the default object or array type, and so the pattern will fail if the value is an Object-typed reference to a different JSON library’s object or array type. To solve this you have to cast the value to at least the root of the correct type hierarchy (e.g. JToken/JsonNode) to let the compiler pick up the correct extensions.

Why Not Just Use Serialization?

On a previous post one or more commenters asked (paraphrased), “Why bother with this when everybody uses serialization?”

Well, for starters everyone isn’t using serialization. Only people who are using serialization NOW are using serialization. There are developers who aren’t exploring the power of the cloud or NoSQL database techniques at all and maybe this way of doing things makes it more appealing or more productive for them to do so, myself included.

I love types, I do. But honestly I’m a little fatigued on superfluous data transfer types that only exist to be serialized/deserialized. I had a project recently that was inundated with these anemic domain “models” for the sake of “doing it right” even in situations where no class was really needed.

For example, we had this dashboard API, part of which returned data to be rendered in the browser by a client-side JavaScript charting library in a SPA. There was absolutely NO value in trying to recreate the API of the JavaScript library. Ultimately, to the chagrin of my peers, I just constructed and returned a bunch of JObjects and JArrays matching what the charting library expected rather than “pretending” to build some rich type-safe object model that was just going to get stringified immediately.

I worked on the Roslyn APIs for over half a decade; I believe in the benefits of types. But I also spent a good chunk of my career chasing after the ideal of “persistence ignorance”. The idea that domain objects are pure and free floating abstractions that are the true model of all software and the fact that objects happen to get saved to databases, or happen to get saved to the file system, or happen to get sent back and forth over the network via services is just an implementation detail. And in plenty of cases that’s absolutely true.

But there are also cases where the goal is to talk to a REST service you don’t own, or to manipulate some JSON in a database. If the currency of truth in an operation is JSON, it’s not the implementation detail, your POCOs are. The JSON is the whole point. Serialization classes are just something I would have done to make the SPA’s JavaScript charting library fit into a .NET world; I didn’t own that model.

And besides, if it were true that serialization is the be-all and end-all of dealing with JSON, no one would make types like those in the System.Text.Json.Nodes or Newtonsoft.Json.Linq namespaces. They exist both for convenience and because sometimes you’re working with highly heterogeneous, schema-less data where the impedance mismatch between your data and the OOP world imposes a cost you deem not worth paying.

Another example that comes to mind is DataTables.

Wait, hear me out!

We’ve had typed DataSets in .NET for almost 2 decades now. Strongly typed. IntelliSense. All that. We’ve also had popular ORMs on .NET for almost as long. Yet often when I look on Facebook or a forum, there I see people using untyped datasets. Why?

My hypothesis is that when thinking about databases DataSets and DataTables are this remarkably minimal abstraction over the natural domain of a relational result set/table/row/column. They are to databases what HTML and CSS are to Web UI (as contrasted with the abstractions of Web Forms). Some people just like that thin abstraction, or need it, or benefit from it. My hypothesis is that untyped JSON objects are the analogous abstraction for the Cloud and NoSQL and that providing them will bring more developers to modern paradigms beyond the ones who are doing it now.

And then there are interactive and exploratory scenarios… if I’m in an interactive or exploratory context (and as part of this modding endeavor I plan to pursue more interactive and scripting contexts for VB.NET) I don’t want to jump through all the hoops of serialization to explore an API or a database. This convenience empowers me at different phases of my project or different activities on a project where speed and ease (for me) are paramount.

Sometimes when I’m mocking out a UI I don’t make any classes, I just whip out some XML literals and mock up an entire data model to bind to in XAML because that’s the fastest way to get a prototype going. And don’t even get me started on all of these reporting-only small classes that only exist for one UI or another, no domain purpose: OrderSlim, OrderSearchResult, OrderSummary, OrderInfo, OrderViewOnPage4. I’m very interested in exploring CQRS and I think raw JSON is a perfect fit for a lot of the Query responsibilities instead of all those fake domain objects.

In short, after all that rambling, there are tradeoffs and these features exist on a spectrum, and I’m making them to give the language more versatility and appeal to a broader set of users and scenarios. Serialization still cool.

What’s Next?

Why, “Wave 2” of course! It won’t be a big secret like “Wave 1”. I’m going to be upfront about what’s going to be in it, but I need a little recovery time before getting started on it, and I need to tend to other matters in my life which have gone neglected these last two months. I also plan to blog a little more about the contents of “Wave 1” and release a couple more NuGet packages with JSON literal and pattern matching support for a few more JSON APIs such as MongoDB and Oracle NoSQL.

- Read this post for instructions on how to install ModVB for yourself

- Try out the mod—do anything you’re interested in doing with the new features

- File bugs at https://github.com/modvb/backlog/issues

- Feedback and discussion on my blog, your blog, Twitter, YouTube, or wherever you hang out with other VB.NET enthusiasts!

- Don’t forget to SHARE!

You Anthony D are the future of VB.NET, don’t forget that!


Suppose we have this Json:

    Dim glossary = {
        $"title": "Hello",
        "GlossList": {
            "GlossEntry": {
                "ID": "SGML",
                "SortAs": "SGML",
                "GlossTerm": "Standard Generalized Markup Language",
                "Acronym": "SGML",
                "Abbrev": "ISO 8879:1986",
                "GlossDef": {
                    "para": "A meta-markup language, used to create markup languages such as DocBook.",
                    "GlossSeeAlso": [ "GML", "XML" ]
                },
                "GlossSee": "markup"
            }
        }
    }

I tried this but it throws an exception:

    Dim x = glossary.Descendants.Values("GlossEntry").First

I tried this and it works:

    Dim result = glossary.Descendants().Where(
        Function(o)
            Select Case ShapeOf o
                Case {
                    "GlossEntry": {"ID": id As String}
                }
                    Console.WriteLine(id)
                    Return True
            End Select
            Return False
        End Function
    )

But obviously, things could be easier. We need to be able to use ShapeOf in If conditions and query clauses, so that we can write:

    Dim result = From o In glossary.Descendants()
                 Where ShapeOf o Is {
                     "GlossEntry": {"ID": id As String}
                 }
                 Select (o, id)

    Console.WriteLine(result.First.id)


ShapeOf in conditions is coming in a future release; it was just much faster to release the Select Case form first. I’m going to blog about this specifically in the next week.


There is a bug if the time is negative: the fragment below works if “relativeOffset” is positive but breaks if “relativeOffset”: -86301.

```
"sgs": [
    {
        "sg": 107,
        "datetime": "2021-05-21T01:08:00.000Z",
        "timeChange": false,
        "sensorState": "NO_ERROR_MESSAGE",
        "kind": "SG",
        "version": 1,
        "relativeOffset": 86301
    },
```

Also, it only seems to work with NewtonSoftJson and not system.JSON, both work fine.


Thanks for reporting. I’ll look into this ASAP!


The “both work fine” comment might be confusing. You can install both, but if you don’t install Newtonsoft the program will not compile. That comment has nothing to do with the minus sign. Also, I am very confused about how to use this feature. After removing the minus sign, which isn’t needed, I converted the whole file with names and types. My Select Case has one Case {entire converted JSON file}; this is a sample of the data I get from the server: https://github.com/src/CareLink/SampleUserData.json. All the simple fields (name=“value”) work exactly as expected, but I don’t understand how to use this to process arrays or arrays of arrays. Eventually the data ends up in one of 20 DataGridViews and all the arrays of arrays are plotted.


Is there a Git Repo or somewhere else to report bugs?

In the JSON stream I am getting dateTime, DateTIme, and datetime, which all have different meanings (GMT time, local time, Unix epoch time…). Currently I use [] to preserve the casing and different conversion functions for each; is this possible? Also, [] are not allowed in naming, but it seems it’s OK to have a variable named DateTime in the Select; is that correct?


https://github.com/modvb/backlog/issues

